A Step-by-Step Guide to Modernizing Community Search with Hybrid Retrieval and Automated Evaluation

Introduction

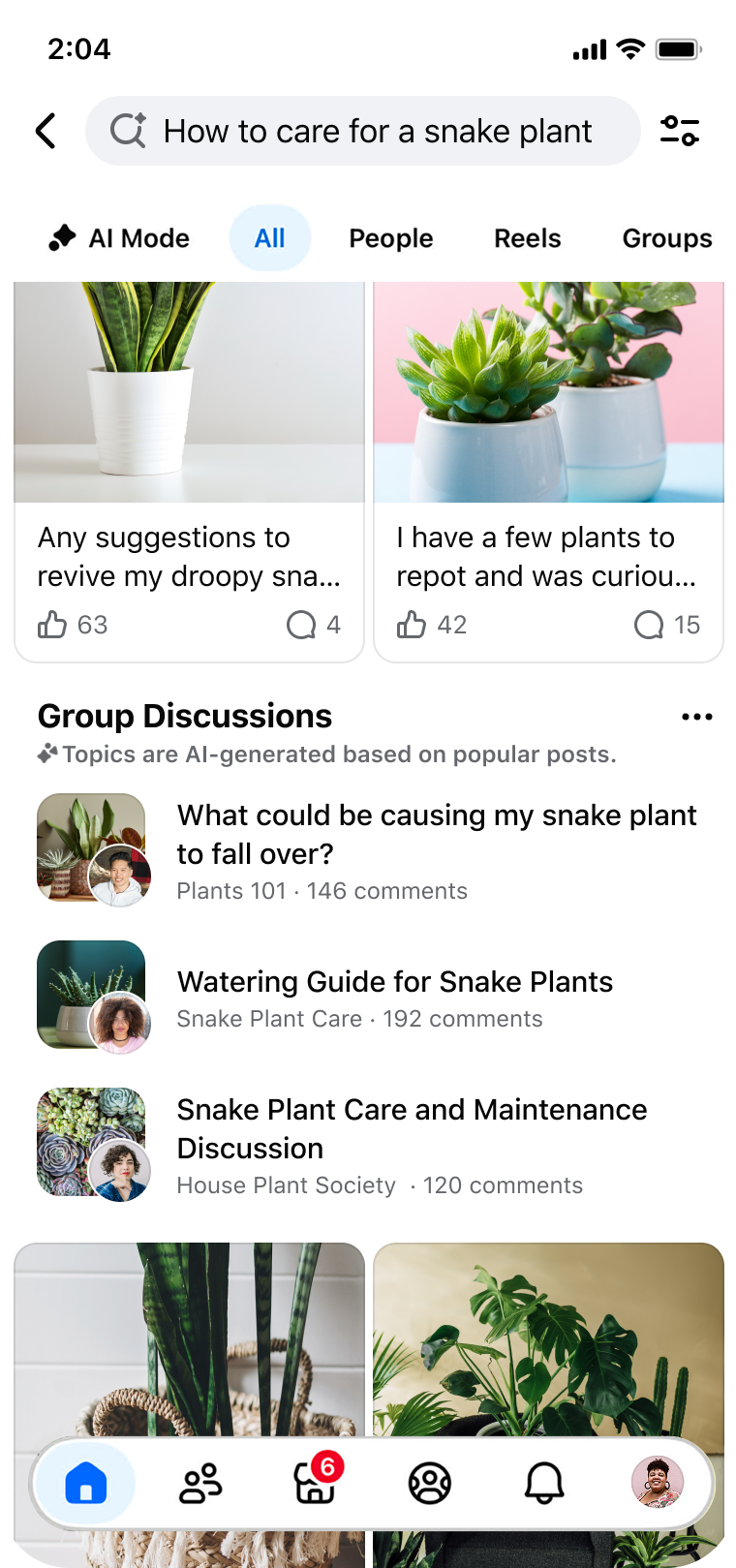

Community knowledge is a goldmine, but searching through it often feels like panning for nuggets in a river of mud. Whether you run a Facebook group or any other large online community, you have likely watched users fail to find what they need even when the answer is already there. This guide walks through the approach Facebook used to overhaul Groups search. That approach moves beyond simple keyword matching to a hybrid retrieval system backed by automated evaluation. The guide breaks it into steps you can adapt for your own platform.

What You Need

- A search index or database of community posts and comments

- Access to a semantic search model (e.g., a transformer-based encoder such as BERT or Sentence-BERT)

- An existing lexical search system (e.g., Elasticsearch with BM25)

- Labeled test queries or a set of representative user intents

- An evaluation framework (e.g., human relevance judges or click-through logs)

- Compute resources for model training/inference

- A/B testing infrastructure to measure engagement and error rates

Step 1: Identify the Three Core Friction Points in Community Search

Before making any changes, you must understand exactly where users struggle. Facebook identified three major friction points:

- Discovery – The gap between natural-language intent and exact keyword matches. For instance, a search for “small individual cakes with frosting” can return zero results if the community's posts only ever say “cupcakes.”

- Consumption – Even when relevant content is found, users have to scroll through dozens of comments to piece together an answer—what Facebook calls the “effort tax.”

- Validation – Users need trusted community wisdom to make decisions (e.g., “Is this vintage Corvette a good buy?”), but that wisdom is scattered across group discussions.

Document how these friction points manifest in your own community by analyzing search logs, conducting user interviews, and tracking abandonment rates.
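The sketch below is a starting point for the log analysis. It computes two friction signals from search logs. The first is the share of searches that return nothing, which points to a discovery gap. The second is the share of searches that show results but get no click, which is a rough proxy for abandonment. The `SearchEvent` record and its fields are a hypothetical log schema, not Facebook's. Adapt them to whatever your logging captures.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class SearchEvent:
    # Hypothetical log schema: one record per search (not a real Facebook format).
    query: str
    num_results: int
    clicked: bool


def friction_report(events, top_n=5):
    """Summarize zero-result and no-click rates from a list of SearchEvent records."""
    total = len(events)
    if total == 0:
        return {"zero_result_rate": 0.0, "abandonment_rate": 0.0, "top_zero_result_queries": []}
    # Discovery friction: the query matched nothing at all.
    zero = [e for e in events if e.num_results == 0]
    # Possible consumption/validation friction: results were shown but none was clicked.
    abandoned = [e for e in events if e.num_results > 0 and not e.clicked]
    # The most frequent zero-result queries (case-folded) are likely vocabulary gaps.
    top_zero = Counter(e.query.lower() for e in zero).most_common(top_n)
    return {
        "zero_result_rate": len(zero) / total,
        "abandonment_rate": len(abandoned) / total,
        "top_zero_result_queries": top_zero,
    }


events = [
    SearchEvent("small individual cakes with frosting", 0, False),
    SearchEvent("Small individual cakes with frosting", 0, False),
    SearchEvent("cupcakes", 12, True),
    SearchEvent("snake plant watering", 8, False),
]
report = friction_report(events)
```

Frequent zero-result queries make good candidates for the test set in Step 3. Many of them will be vocabulary mismatches like “cakes” versus “cupcakes.”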

Step 2: Implement a Hybrid Retrieval Architecture

Traditional lexical search (keyword matching scored by a function such as BM25) is fast but brittle. Pure semantic search handles paraphrases well, but it is computationally heavier and can miss exact matches. The solution is a hybrid approach that combines both.

2.1 Keep your existing lexical index

Lexical systems like BM25 are excellent at matching specific terms and phrases. Retain yours as the first retrieval layer, where it handles straightforward queries, names, and exact phrases cheaply.
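To make the lexical layer concrete, here is a minimal Okapi BM25 scorer in pure Python. It is an illustration only. The whitespace tokenizer, the Lucene-style IDF, and the defaults `k1=1.2` and `b=0.75` are assumptions chosen for the sketch. In production you would keep your existing engine (e.g., Elasticsearch) rather than use this class.

```python
import math
from collections import Counter


def tokenize(text):
    # Naive lowercase whitespace tokenizer; real engines add stemming, stopwords, etc.
    return text.lower().split()


class BM25:
    def __init__(self, docs, k1=1.2, b=0.75):
        if not docs:
            raise ValueError("BM25 needs a non-empty corpus")
        self.k1, self.b = k1, b
        self.docs = [tokenize(d) for d in docs]
        self.tfs = [Counter(d) for d in self.docs]          # term frequencies per doc
        self.avgdl = sum(len(d) for d in self.docs) / len(self.docs)
        df = Counter(t for d in self.docs for t in set(d))  # document frequencies
        n = len(self.docs)
        # Lucene-style IDF, which stays non-negative even for very common terms.
        self.idf = {t: math.log((n - f + 0.5) / (f + 0.5) + 1) for t, f in df.items()}

    def score(self, query, i):
        tf, dl = self.tfs[i], len(self.docs[i])
        s = 0.0
        for t in tokenize(query):
            if t not in tf:
                continue  # exact-token matching only: "cakes" never matches "cupcakes"
            f = tf[t]
            norm = f + self.k1 * (1 - self.b + self.b * dl / self.avgdl)
            s += self.idf[t] * f * (self.k1 + 1) / norm
        return s

    def search(self, query, top_k=3):
        """Return up to top_k (doc_index, score) pairs with a positive score, best first."""
        hits = [(i, self.score(query, i)) for i in range(len(self.docs))]
        hits = [(i, s) for i, s in hits if s > 0]
        return sorted(hits, key=lambda h: h[1], reverse=True)[:top_k]


docs = [
    "best cupcakes recipe with buttercream frosting",
    "how often to water a snake plant",
    "vintage corvette buying checklist",
]
bm25 = BM25(docs)
```

With this corpus, the query “small individual cakes” scores zero against the cupcake post. That is exactly the discovery gap from Step 1 that the semantic layer is meant to close.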

2.2 Add a semantic retrieval layer

Train or fine-tune a neural encoder (e.g., Sentence-BERT) that maps queries and documents into a shared embedding space. Then retrieve the documents whose embeddings lie closest to the query's. When a user types “Italian coffee drink,” the semantic model can retrieve posts about “cappuccino” even if those posts never contain the word “coffee.”
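Semantic retrieval then reduces to nearest-neighbor search over vectors. The sketch below does brute-force cosine-similarity search. The three-dimensional vectors are made-up stand-ins for real encoder output, since a Sentence-BERT model produces vectors with hundreds of dimensions. The document IDs are also hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def dense_search(query_vec, doc_vecs, top_k=3):
    """Brute-force nearest-neighbor search over precomputed document embeddings."""
    scored = [(doc_id, cosine(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    return sorted(scored, key=lambda h: h[1], reverse=True)[:top_k]


# Hypothetical toy embeddings; in practice these come from your trained encoder.
doc_vecs = {
    "cappuccino-tips": [0.9, 0.1, 0.0],
    "corvette-review": [0.1, 0.9, 0.1],
    "snake-plant-care": [0.0, 0.2, 0.9],
}
query_vec = [0.8, 0.2, 0.1]  # pretend encoding of "Italian coffee drink"
results = dense_search(query_vec, doc_vecs)
```

Brute force is fine for a pilot. At platform scale, the same lookup is usually served by an approximate nearest neighbor index, as noted in the tips below.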

2.3 Merge results with a scoring mechanism

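Combine lexical and semantic scores using a weighted formula or a learning-to-rank model. BM25 scores are unbounded, while cosine similarities fall between -1 and 1. Normalize each score list (or fuse by rank instead of raw score) before combining them. This ensures that both precise keyword hits and conceptual matches are surfaced. Facebook's architecture re-ranks the merged results to prioritize relevance without sacrificing speed.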
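Two common ways to merge the result lists are sketched below. Both assume each retriever returns `{doc_id: score}` or an ordered list of IDs. The first method min-max normalizes both score lists and takes a weighted sum, where `alpha` is a hypothetical tuning weight for the lexical side. The second method is reciprocal rank fusion (RRF), which ignores raw scores and uses only rank positions, with the conventional constant `k=60`. Neither is claimed to be Facebook's method.

```python
def min_max(scores):
    """Rescale a {doc_id: score} map to [0, 1] so BM25 and cosine scores are comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {d: 1.0 for d in scores}
    return {d: (s - lo) / (hi - lo) for d, s in scores.items()}


def weighted_fusion(lexical, semantic, alpha=0.5):
    """alpha weights the lexical side; a doc missing from one list gets 0 on that side."""
    lex, sem = min_max(lexical), min_max(semantic)
    fused = {d: alpha * lex.get(d, 0.0) + (1 - alpha) * sem.get(d, 0.0)
             for d in set(lex) | set(sem)}
    return sorted(fused.items(), key=lambda kv: kv[1], reverse=True)


def reciprocal_rank_fusion(rankings, k=60):
    """Sum 1 / (k + rank) across ranked ID lists; needs only orderings, not raw scores."""
    fused = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(fused.items(), key=lambda kv: kv[1], reverse=True)


lexical = {"a": 12.0, "b": 3.0}               # hypothetical BM25 scores
semantic = {"b": 0.92, "c": 0.85, "a": 0.40}  # hypothetical cosine scores
by_weight = weighted_fusion(lexical, semantic, alpha=0.3)
by_rrf = reciprocal_rank_fusion([["a", "b"], ["b", "c", "a"]])
```

RRF needs no score calibration, which makes it a convenient baseline. A tuned weighted sum or a learning-to-rank model may do better once you have labeled data to tune against.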

Step 3: Deploy Automated Model-Based Evaluation

To validate that your hybrid system actually improves things—without introducing new errors—you need automated evaluation.

3.1 Create a test set of realistic queries

Gather a diverse set of user queries that represent the three friction points. For example, queries that require synonym understanding (“small cakes” → “cupcakes”), queries that demand summary answers (“snake plant watering tips”), and queries for product validation (“vintage Corvette pros/cons”).

3.2 Define relevance metrics

Choose ranking metrics such as NDCG (Normalized Discounted Cumulative Gain) or MRR (Mean Reciprocal Rank). For each query, have human judges rate the relevance of the top results returned by both the old and the new system.
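Both metrics are short to implement. This sketch uses graded human judgments (for example, 0 to 3) with the exponential-gain form of DCG. It computes the ideal ranking from all judged documents for the query. The data layout is an assumption for illustration.

```python
import math


def dcg(relevances, k):
    # Exponential gain (2^rel - 1) discounted by log2(position + 1), positions starting at 1.
    return sum((2 ** rel - 1) / math.log2(pos + 1)
               for pos, rel in enumerate(relevances[:k], start=1))


def ndcg_at_k(ranked_ids, judgments, k=10):
    """ranked_ids: system output; judgments: {doc_id: graded relevance} from human raters."""
    gains = [judgments.get(d, 0) for d in ranked_ids]  # unjudged docs count as 0
    ideal = sorted(judgments.values(), reverse=True)
    idcg = dcg(ideal, k)
    return dcg(gains, k) / idcg if idcg > 0 else 0.0


def mean_reciprocal_rank(runs):
    """runs: list of (ranked_ids, relevant_id_set) pairs, one per query."""
    if not runs:
        return 0.0
    total = 0.0
    for ranked_ids, relevant in runs:
        for rank, doc_id in enumerate(ranked_ids, start=1):
            if doc_id in relevant:
                total += 1.0 / rank  # only the first relevant hit counts
                break
    return total / len(runs)
```

Run the same test queries through the old and new systems, then compare the per-query and averaged scores.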

3.3 Train a model to predict relevance

Facebook implemented an automated model that mimics human relevance judgments. This allows rapid iteration, because you can run thousands of evaluation cycles without manual effort. The model can also flag discrepancies, such as a highly relevant post that ranks low in the results.
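The source does not describe the evaluation model's internals. The sketch below therefore treats it as an opaque, hypothetical `judge(query, doc_id)` function that returns a relevance probability, and builds two checks around it. The first check flags results the judge rates as highly relevant but the ranker placed below a cutoff. The second check measures how often the judge agrees with human labels, which tells you how far to trust it as a proxy. The `threshold` and `cutoff` values are arbitrary assumptions.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical signature for the automated evaluation model: (query, doc_id) -> P(relevant).
Judge = Callable[[str, str], float]


def flag_discrepancies(query: str, ranked_ids: List[str], judge: Judge,
                       threshold: float = 0.8, cutoff: int = 3) -> List[Tuple[str, int, float]]:
    """Return (doc_id, rank, score) for docs the judge rates >= threshold but ranked after `cutoff`."""
    flags = []
    for rank, doc_id in enumerate(ranked_ids, start=1):
        score = judge(query, doc_id)
        if rank > cutoff and score >= threshold:
            flags.append((doc_id, rank, score))
    return flags


def agreement_rate(judge: Judge, human_labels: Dict[Tuple[str, str], int],
                   threshold: float = 0.5) -> float:
    """Share of (query, doc_id) pairs where the judge's binarized call equals the human label (1 = relevant)."""
    if not human_labels:
        return 0.0
    matches = sum(int(judge(q, d) >= threshold) == label
                  for (q, d), label in human_labels.items())
    return matches / len(human_labels)


# Stub judge backed by a lookup table, for illustration only.
_scores = {("snake plant watering", "d1"): 0.2, ("snake plant watering", "d5"): 0.95}
stub_judge: Judge = lambda q, d: _scores.get((q, d), 0.0)
```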

3.4 Monitor error rates

Track false positives (irrelevant results pushed to the top) and false negatives (relevant results omitted). Facebook reported tangible improvements in search engagement and relevance with no increase in error rates—a critical benchmark.
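For each query, you can read both error types off a ranked list and a set of known-relevant documents at a cutoff `k`. In this sketch, the false-positive rate is the share of the top `k` that is irrelevant (1 minus precision@k). The false-negative rate is the share of relevant documents missing from the top `k` (1 minus recall@k). These definitions are one reasonable choice, not necessarily the ones Facebook used.

```python
def error_rates(ranked_ids, relevant_ids, k=10):
    """Return (false_positive_rate, false_negative_rate) for one query at cutoff k."""
    top_k = ranked_ids[:k]
    # Irrelevant results that made it into the top k (1 - precision@k).
    fp_rate = sum(d not in relevant_ids for d in top_k) / len(top_k) if top_k else 0.0
    # Relevant results that the top k omitted (1 - recall@k).
    top_set = set(top_k)
    fn_rate = sum(d not in top_set for d in relevant_ids) / len(relevant_ids) if relevant_ids else 0.0
    return fp_rate, fn_rate


def mean_error_rates(runs, k=10):
    """Average per-query rates over (ranked_ids, relevant_ids) pairs, e.g. baseline vs. hybrid."""
    if not runs:
        return 0.0, 0.0
    rates = [error_rates(ranked, relevant, k) for ranked, relevant in runs]
    return (sum(fp for fp, _ in rates) / len(rates),
            sum(fn for _, fn in rates) / len(rates))
```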

Step 4: Iterate and Scale with Continuous Feedback

Once the hybrid system is live, treat it as a living project.

- Gather implicit signals: Click-through rates, time spent on result pages, and follow-up queries reveal user satisfaction.

- Retrain models periodically: Language evolves; new topics emerge. Schedule regular retraining of your semantic encoder and evaluation model.

- Run A/B experiments: Compare the hybrid system against the old lexical baseline. Facebook reported a measurable lift in search engagement with no increase in error rates.

- Extend to consumption and validation: Consider adding answer extraction or summarization features to reduce the effort tax. For validation, surface aggregated opinions (e.g., “80% of members recommend this”).
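A two-proportion z-test on click-through rate is a common starting point for deciding whether an engagement lift in an A/B test is more than noise. The sketch below implements the test with the standard library. The click and search counts in the example are invented for illustration.

```python
import math


def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Two-sided z-test for a CTR difference between control (a) and treatment (b).

    Returns (absolute_lift, z, p_value)."""
    if n_a == 0 or n_b == 0:
        raise ValueError("both arms need at least one search")
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)  # CTR under the null of no difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return p_b - p_a, 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, z, p_value


# Hypothetical experiment: 10,000 searches per arm.
lift, z, p = two_proportion_z_test(1200, 10_000, 1300, 10_000)
```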

Tips for Success

- Start small: Pilot your hybrid retrieval on a single, active group before rolling out platform-wide.

- Balance cost vs. quality: Semantic retrieval can be expensive; cache embeddings and use approximate nearest neighbor (ANN) indexes to keep latency low.

- Don't forget the “effort tax”: Even great retrieval is useless if users still have to read 50 comments. Explore ways to condense community knowledge into digestible snippets.

- Validate with real users: Automated evaluation is a proxy. Always cross-check with user satisfaction surveys.

- Document your learnings: Facebook published a paper on their approach. Sharing your experience can help others and build community trust.

By following these four steps—identifying friction points, adopting hybrid retrieval, automating evaluation, and iterating—you can modernize community search and help users discover, consume, and validate the collective wisdom that makes groups so powerful.
