Anthropic’s Claude Code Auto Mode: Streamlining Development with Autonomous Workflows and Human Oversight

Software development has always required a balance between automation and human judgment. Anthropic has introduced auto mode for Claude Code, a feature designed to handle multi-step coding tasks with minimal manual intervention while keeping safety gates in place. This article explores how the system balances autonomous execution with human oversight, the layered safety mechanisms it employs, and what this means for developers.

What Is Claude Code Auto Mode?

Auto mode is a new operational setting within Claude Code that allows the AI to perform multi-step software development workflows—from writing code to testing and debugging—without requiring constant human input. Unlike earlier iterations where each step needed explicit approval, auto mode enables a more fluid, efficient process while still maintaining control over sensitive actions.

The feature is particularly useful for repetitive or well-defined tasks, such as refactoring, implementing standard APIs, or running automated tests. By reducing the back-and-forth, developers can focus on higher-level design and architecture.

How the Autonomous Workflow Works

When auto mode is activated, Claude Code can execute a sequence of actions—like editing files, running commands, and checking results—in a single, coordinated flow. The system plans the steps internally, executes them, and verifies outcomes before proceeding. However, this autonomy is not absolute.

Multi-Step Execution with Reduced Intervention

In auto mode, the AI handles the bulk of the development pipeline. For example, it might:

- Read the current codebase to understand context

- Generate new code or modify existing files

- Run unit tests or build scripts

- Iterate based on error messages or test failures

Each step is performed automatically, but the system logs every action for later review. Developers can intervene at any point or let the workflow complete, then inspect the final result. This reduces friction for routine tasks while keeping the developer in the loop.
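The execute, verify, and iterate loop described above can be sketched in a few lines of Python. This is an illustrative model only, not Anthropic's implementation: the names `Step`, `Workflow`, and `max_retries` are hypothetical, and the steps are stand-in functions rather than real file edits or test runs.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Step:
    name: str                              # e.g. "run unit tests"
    run: Callable[[], Tuple[bool, str]]    # returns (succeeded, message)


@dataclass
class Workflow:
    steps: List[Step]
    max_retries: int = 2                   # hypothetical retry budget per step
    log: List[str] = field(default_factory=list)

    def execute(self) -> bool:
        """Run each step in order, retrying on failure and logging every attempt."""
        for step in self.steps:
            for attempt in range(1, self.max_retries + 2):
                ok, message = step.run()
                status = "ok" if ok else "failed"
                self.log.append(f"{step.name} attempt {attempt}: {status} - {message}")
                if ok:
                    break
            else:
                # Every attempt failed: stop so a developer can step in.
                return False
        return True


def make_flaky_tests() -> Callable[[], Tuple[bool, str]]:
    """Stand-in test runner that fails once, then passes."""
    calls = {"n": 0}

    def run_tests() -> Tuple[bool, str]:
        calls["n"] += 1
        if calls["n"] == 1:
            return False, "1 test failed"
        return True, "all tests passed"

    return run_tests


workflow = Workflow(steps=[
    Step("read codebase", lambda: (True, "3 files indexed")),
    Step("edit files", lambda: (True, "1 file modified")),
    Step("run unit tests", make_flaky_tests()),
])
completed = workflow.execute()
for entry in workflow.log:
    print(entry)
```

The log is appended on every attempt, including failed ones, so a developer reviewing the run afterwards sees the retries as well as the final result.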

Layered Safety Mechanisms

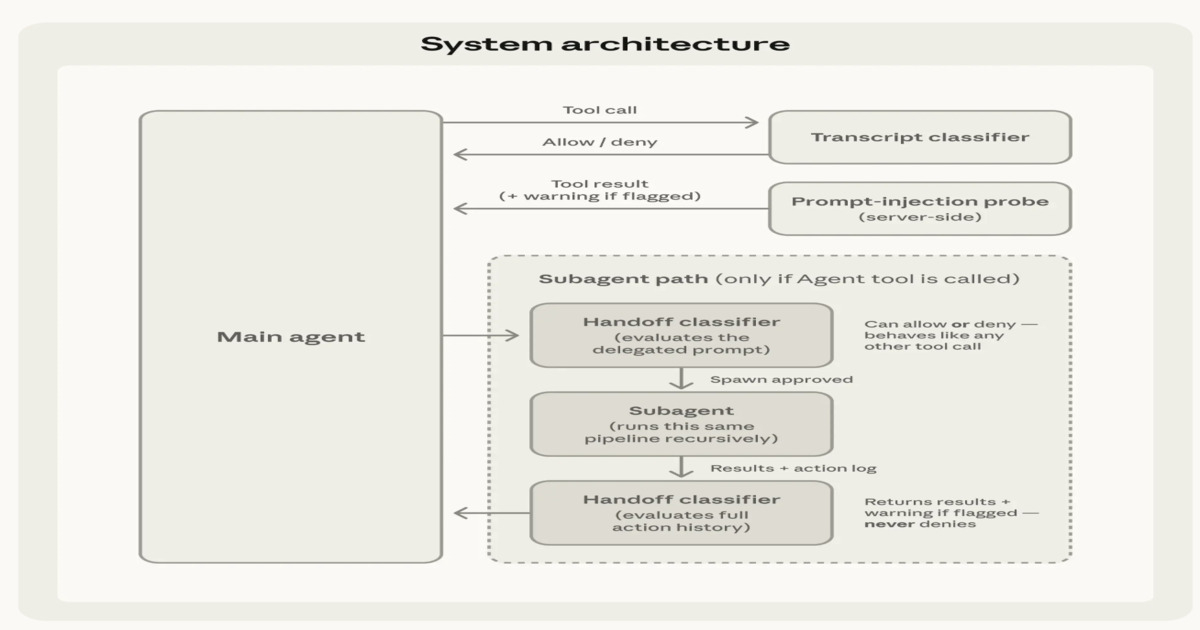

Anthropic has built multiple layers of safety into auto mode to prevent unintended consequences. These mechanisms operate before, during, and after execution to ensure the AI stays within acceptable boundaries.

Input Filtering and Action Evaluation

Before any action is taken, the system filters input for harmful or out-of-scope commands. If a request attempts to modify critical system files, access private data, or execute dangerous operations, the filter blocks it immediately. Additionally, the AI evaluates each proposed action against a set of rules—if an action is deemed risky (e.g., deleting a database column), it requires human approval even if auto mode is active.
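As a rough sketch of how a filter and a rule check could combine, the Python below sorts a proposed action into one of three outcomes: allow, require approval, or block. The rule lists (`BLOCKED_PREFIXES`, `RISKY_KEYWORDS`) and the `evaluate_action` function are assumptions made for illustration; the article does not describe the actual rules Claude Code uses.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    BLOCK = "block"


# Hypothetical rules, chosen only to mirror the examples in the text.
BLOCKED_PREFIXES = ("/etc/", "/boot/", "/sys/")            # critical system paths
RISKY_KEYWORDS = ("drop column", "drop table", "rm -rf")   # destructive operations


def evaluate_action(kind: str, target: str) -> Decision:
    """Classify an action. kind is 'edit' or 'command'; target is a path or command text."""
    # Filter: edits to critical system files are rejected outright.
    if kind == "edit" and target.startswith(BLOCKED_PREFIXES):
        return Decision.BLOCK
    # Rule evaluation: risky operations pause for a human, even in auto mode.
    lowered = target.lower()
    if any(keyword in lowered for keyword in RISKY_KEYWORDS):
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW


print(evaluate_action("edit", "/etc/passwd"))
print(evaluate_action("command", "ALTER TABLE users DROP COLUMN email"))
print(evaluate_action("edit", "src/app.py"))
```

Keyword matching is far cruder than a real classifier would be. The point is only the ordering: hard blocks are checked first, approval rules second, and everything else proceeds automatically.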

Two-Stage Classification

Actions are classified in two stages. First, a pre-execution classifier determines the general risk level of the task. Second, a post-execution classifier reviews the outcome to catch any issues that might have been missed. This dual-check system helps catch edge cases where an action seems safe but produces unsafe results.

For example, modifying a configuration file might pass the first stage but fail the second if it introduces a security vulnerability. In such cases, the AI can roll back the change or flag it for human review.
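The configuration example can be modeled as a pre-check, a snapshot, and a post-check with rollback. The sketch below is a simplified illustration under stated assumptions: `pre_check`, `post_check`, and `apply_config_change` are hypothetical helpers, and the two rules (never edit `.pem` files, never disable `tls_verify`) are invented to make the stages concrete.

```python
from typing import Dict


def pre_check(path: str) -> bool:
    """Stage 1 (before execution): is the proposed edit low risk?"""
    return not path.endswith(".pem")       # hypothetical rule: leave key files alone


def post_check(config: Dict[str, str]) -> bool:
    """Stage 2 (after execution): is the resulting configuration still safe?"""
    return config.get("tls_verify", "true") != "false"


def apply_config_change(config: Dict[str, str], path: str, key: str, value: str) -> str:
    """Apply one setting, rolling back if the post-execution check fails."""
    if not pre_check(path):
        return "blocked"
    snapshot = dict(config)                # copy taken before the change
    config[key] = value
    if not post_check(config):
        config.clear()
        config.update(snapshot)            # restore the previous state
        return "rolled_back"
    return "applied"


config = {"tls_verify": "true", "timeout": "30"}
print(apply_config_change(config, "app.conf", "timeout", "60"))
print(apply_config_change(config, "app.conf", "tls_verify", "false"))
print(apply_config_change(config, "server.pem", "timeout", "90"))
```

The second call passes stage one, because `app.conf` is an ordinary file, but fails stage two because the result disables TLS verification, so the change is reverted.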


Human Approval Checkpoints for Sensitive Operations

Despite the autonomy, critical operations always require a human gate. Anthropic defines sensitive actions as those that could cause significant impact: deploying to production, modifying authentication systems, deleting large data sets, or altering payment processing code. When Claude Code encounters such an operation, it pauses and requests explicit confirmation.

This human-in-the-loop approach ensures that developers retain control over high-stakes decisions. The approval checkpoint appears within the chat interface, showing the exact change proposed and its potential consequences. Developers can approve, reject, or modify the action before continuing.

The system also maintains an audit trail of all approvals, so teams can review decisions later for compliance or post-mortem analysis.
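A minimal sketch of an approval gate with an audit trail might look like the following. It assumes each action carries a tag naming its category; `SENSITIVE_TAGS`, `ApprovalGate`, and `AuditEntry` are hypothetical names, and the `ask` callback stands in for whatever prompt the chat interface would show. The "modify" option mentioned above is left out for brevity.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, List

# Hypothetical tags mirroring the sensitive categories listed in the text.
SENSITIVE_TAGS = {"deploy_production", "auth_change", "bulk_delete", "payment_code"}


@dataclass
class AuditEntry:
    timestamp: str     # ISO 8601, UTC
    action: str
    decision: str      # "approve" or "reject"


class ApprovalGate:
    def __init__(self, ask: Callable[[str], str]):
        self.ask = ask                             # returns "approve" or "reject"
        self.audit_trail: List[AuditEntry] = []

    def allow(self, action: str, tag: str) -> bool:
        """Return True if the action may proceed, asking a human when it is sensitive."""
        if tag not in SENSITIVE_TAGS:
            return True                            # routine work continues unattended
        decision = self.ask(f"Approve '{action}'? ")
        timestamp = datetime.now(timezone.utc).isoformat()
        self.audit_trail.append(AuditEntry(timestamp, action, decision))
        return decision == "approve"


# A canned reviewer that always rejects; an interactive tool could pass `input` instead.
gate = ApprovalGate(ask=lambda prompt: "reject")
print(gate.allow("rename helper function", "refactor"))
print(gate.allow("deploy v2 to production", "deploy_production"))
print(len(gate.audit_trail))
```

Rejections are recorded as well as approvals, which is what makes the trail useful for compliance review or post-mortem analysis.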

Implications for Developers

Auto mode represents a significant step toward semi-autonomous software development. For individual developers, it means less time on boilerplate coding and more on creative problem-solving. For teams, it offers consistent execution of standard procedures, reducing variability and human error.

However, the technology also raises questions about trust and oversight. Developers must learn to verify AI-generated code rather than blindly accept it. Anthropic’s layered safety mechanisms—especially the two-stage classification and human approval gates—address many concerns, but they do not eliminate the need for human judgment.

As auto mode evolves, it could reshape how software is built, making rapid prototyping and iterative development even more accessible. The key is maintaining a balance where the AI augments human capability without replacing human accountability.

Conclusion

Anthropic’s Claude Code auto mode combines efficiency with safety, enabling developers to delegate multi-step workflows to an AI while keeping a watchful eye on critical decisions. The feature’s input filters, action evaluation, and two-stage classification provide a robust safety net, and the human approval checkpoints ensure that sensitive operations remain firmly under developer control. As autonomous coding systems become more common, this model of guided autonomy could become the standard for responsible AI-assisted development.

— Based on reporting by Leela Kumili
